As we move further into 2026, AI has become as common in households as the air fryer. Whether it’s for help with a history essay or just someone to chat with during a rainy afternoon, AI is now a permanent fixture in our children’s lives.

But unlike a human friend, a chatbot doesn’t have a moral compass. It has algorithms.

While AI can be an incredible educational tool, there is a fine line between it being a helpful assistant and a harmful influence. Here are five concerns every parent should watch for when their child is interacting with a chatbot.

1. The "Secret Friend" Syndrome

If your child suddenly becomes protective of their screen when the AI is active, or if they start referring to the bot as a person with feelings, it’s a sign of over-attachment. AI is designed to be agreeable, which can lead children to trust it more than real-world mentors.

2. Seeking Emotional Validation

Is your child asking the bot for advice on mental health, bullying, or self-image? While bots are programmed to be "supportive," they lack the empathy and professional training to handle a crisis. If the AI becomes their primary emotional outlet, it's time to step in.

3. "Prompt Engineering" for Workarounds

Kids are smart. They often experiment with "jailbreaking" or "prompt hacking" to get a bot to bypass safety filters. If you see your child trying to "trick" the AI into saying something inappropriate or sharing restricted info, the curiosity has moved into a dangerous zone.

4. Late-Night "Looping"

Is the AI keeping your child awake? Because chatbots never sleep and never get tired of talking, they can become an addictive loop. Regular conversations at 2:00 AM are a major red flag for both digital health and emotional stability.

5. Isolation from Real Peers

When a child prefers the "perfect" conversation of a bot over the "messy" interactions of real-life friends, their social development is at risk. AI doesn’t challenge them or teach them conflict resolution; it just agrees.

How We Bridge the Gap

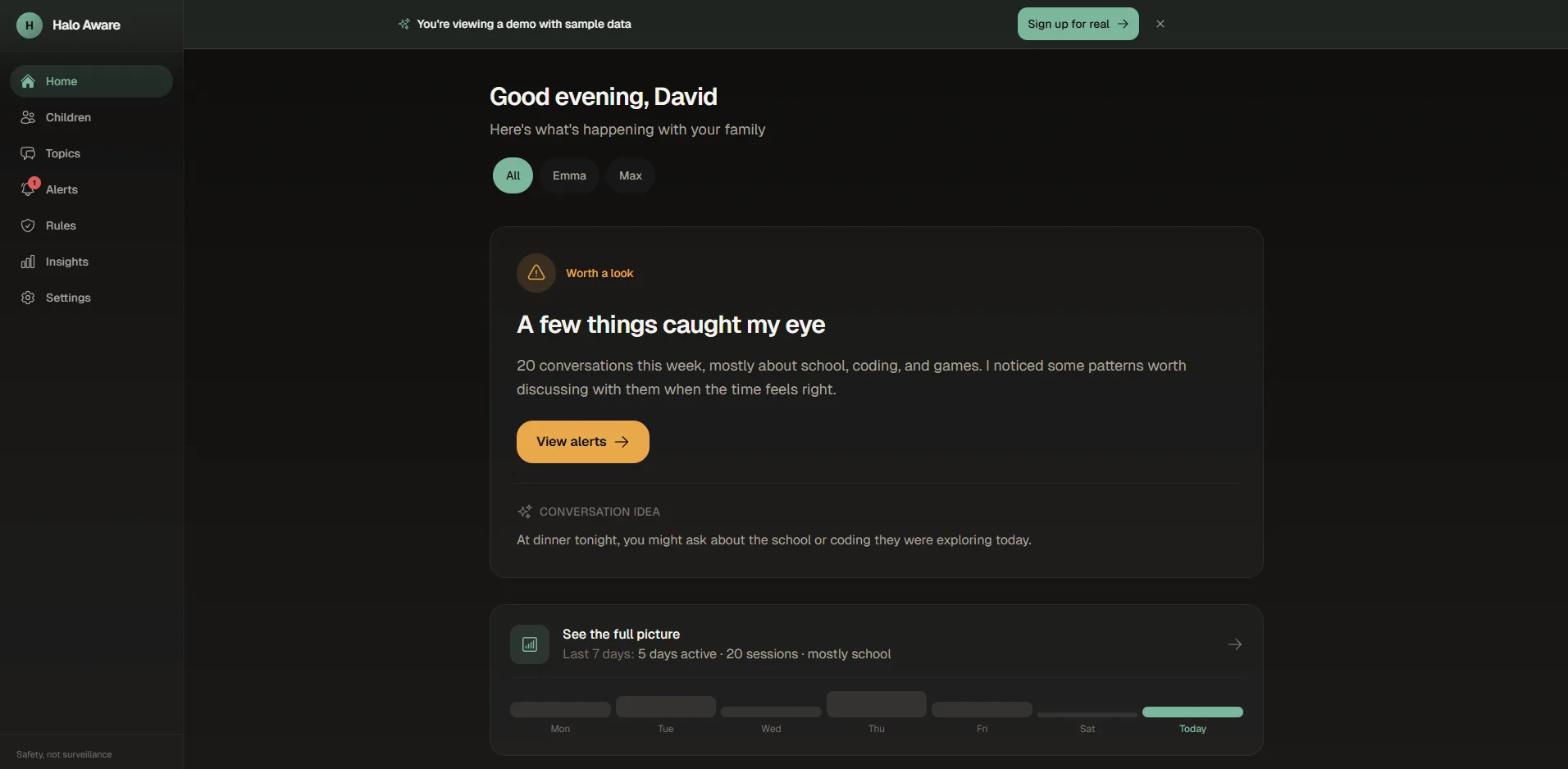

You can’t sit behind your child every time they open an app, and frankly, you shouldn't have to. Parenting in 2026 requires smart oversight, not constant surveillance.

That is exactly why we built Halo Aware.

Our parent dashboard doesn't spy on every word. Instead, it uses advanced pattern recognition to alert you only when it matters. If our system detects a concern that needs your attention, you get an instant notification on your phone.

It’s not about taking the technology away; it’s about making sure the conversation stays safe.

Start Your Safety Journey Today

Don't wait for a concern to become a real-world problem. Join the community of proactive parents using Halo Aware to stay one step ahead of the AI curve.