At Halo Aware, we believe that the best way to protect children in the age of AI isn't to take the tools away, but to provide a safety net that works in the background.

ChatGPT and other generative AI tools have become our children's homework assistants and, in some cases, their therapists. However, because these platforms are built on open-ended language models, they don't always follow the safety boundaries parents expect. To help our community stay informed, we've identified five hidden risks that every parent should understand, along with how a proactive approach to monitoring can make a difference.

1. The "Fact" Illusion (Hallucinations)

ChatGPT doesn't "know" facts; it predicts the most likely next word in a sequence. This can lead to "hallucinations": confidently stated information that is entirely made up.

A child who relies on AI for schoolwork might unknowingly submit "hallucinated" facts. More seriously, they may receive inaccurate advice on health or safety.

The Halo Aware Perspective: While kids should be encouraged to double-check what AI tells them, it's vital to know whether they are treating the bot as an absolute authority on sensitive subjects.

2. The Illusion of Connection

AI is designed to be conversational and empathetic in tone. This can lead children to anthropomorphize the bot, viewing it as a friend rather than a piece of software.

When a child feels they are talking to a friend, they are more likely to share personal vulnerabilities, secrets, or mental health struggles that should be discussed with a parent or professional.

The Halo Aware Perspective: This is where traditional "site blocking" fails. You don't want to read every chat, but you do need to know if the conversation shifts from "help me with math" to "I'm feeling lonely."

3. Circumventing Safety Filters

Most AI companies have "guardrails" to prevent inappropriate content, but "jailbreaking" (using specific prompts to bypass filters) is a growing trend among tech-savvy students.

A child may accidentally (or intentionally) bypass safety settings to access content that isn't age-appropriate.

The Halo Aware Perspective: It is impossible to watch a child’s screen 24/7. Monitoring should be alert-based, identifying the intent of the conversation rather than just the platform being used.

4. Subtle Algorithmic Bias

AI models are trained largely on vast amounts of text from the internet, which means they can inadvertently mirror human biases and stereotypes.

Children are highly impressionable. If a chatbot consistently provides biased perspectives on culture, gender, or history, it can subtly shape a child’s worldview without a parent ever realising it.

5. Persistent Data Footprints

In 2026, AI "memory" is a standard feature. These tools are designed to remember user preferences and past details so they can be more helpful.

Over time, an AI can piece together a surprisingly detailed profile of a child, including their school, their friends, and their daily routine, from small snippets of conversation.

Moving From "Monitoring" to "Mentoring"

At Halo Aware, we know that most parents don't want to invade their child’s privacy by reading every mundane chat about homework or video games. That "hovering" approach can actually break the trust between parent and child.

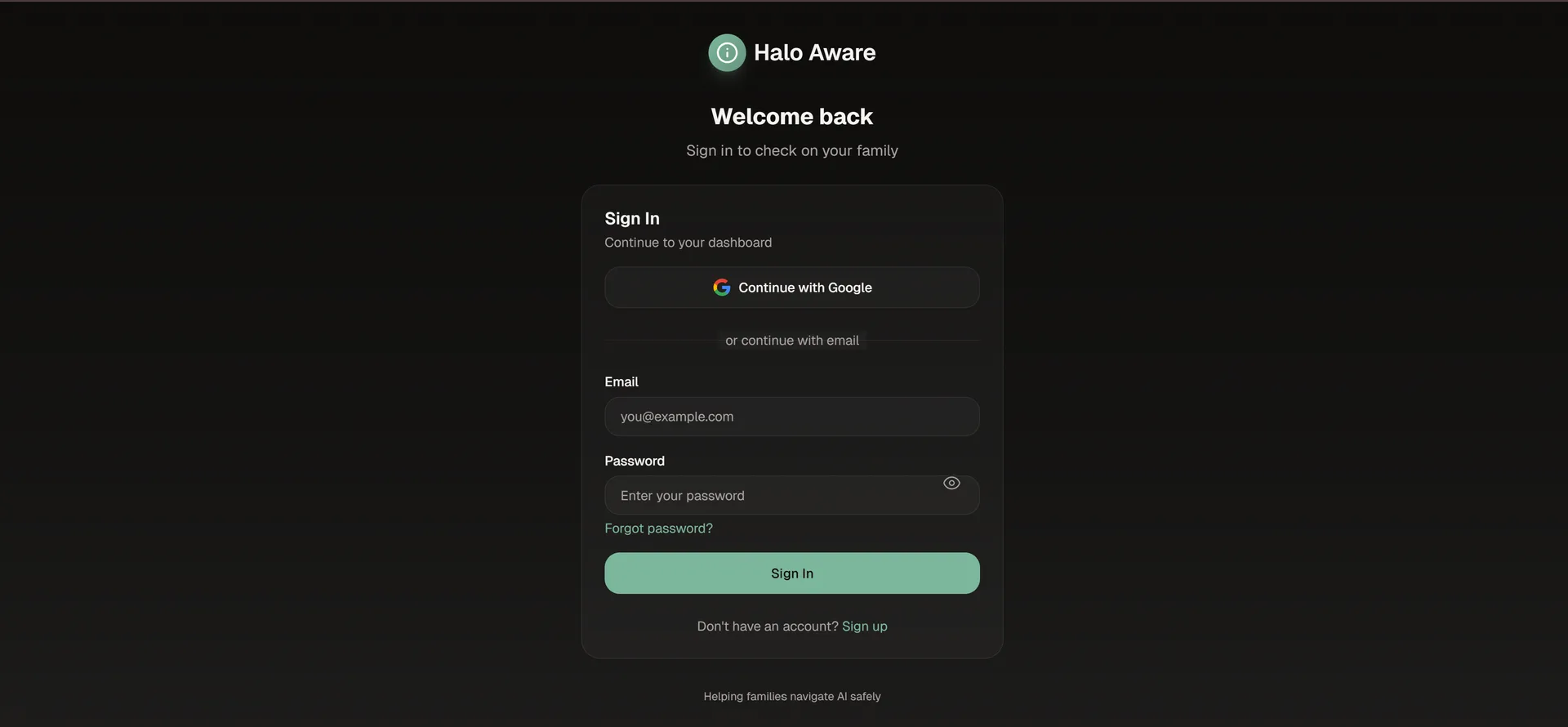

That's why we built the Halo Aware Dashboard. Our technology acts as a silent partner, working quietly in the background of your child's AI interactions. You won't be flooded with data, but you will receive an immediate alert the moment a conversation touches on safeguarding concerns or topics that require a parent's touch.

AI is the future of learning and creativity. With the right guardrails in place, we can ensure our children explore that future safely.